Innovation is the cornerstone of progress at ECC. Staying true to this belief, the Service Transformation team recently hosted a hackathon to explore the possibilities for AI in public services and the tools we can use.

The event began with a quick run-through of the day’s schedule and the rules to be followed, after which we kicked off in true unconference style. The group pitched a myriad of great ideas and comprised individuals from the fields of Research, Data and Analytics, Technology, Transformation and Information Governance.

You can find out more from Vicki James, our Head of Design Operations, who recently wrote about hosting an AI hack day.

Our Team

Amongst the ideas, six of us were particularly intrigued by Jordan’s suggestion of using AI to simplify website content for users. This struck a chord with us because every user fits a different persona and visits the essex.gov website to access information of varying complexity. Jordan’s suggestion was to take this uncertainty out of the equation for the end user.

So the six of us attempted to unpack this further and began by listing the problems and the user groups we wanted to solve for. This “Product” approach would help us prioritise the most impactful and achievable piece of work.

After a few rounds of discussion, we all agreed that we wanted to address the issue of simplifying ‘large pieces of text’ on the website using AI.

Our selection was reaffirmed by an important piece of information shared by Charlie and Nick - the national reading age is 9 years old, which means there is an opportunity to solve a problem that many of our users face.

The Feasibility conundrum

As the day progressed and we began assessing potential solutions for simplifying content, we soon opened the Pandora’s box that is synonymous with AI, even more so in the public services space.

Cost vs Value

The cost of using a tool like ChatGPT could quickly pile up over time as the pricing is directly proportional to the quantity of content being translated. We would need to assess content across all pages to identify what would benefit from being translated. Plus, if translated content repeatedly fails quality checks, there could potentially be an associated cost in training content creators in using the AI.
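To make the "cost grows with content" point concrete, here is a minimal back-of-the-envelope sketch. Everything in it is an assumption for illustration: the function name, the price per 1,000 tokens, the tokens-per-word ratio and the page word counts are all hypothetical, and real pricing should be taken from the provider's current price list.

```python
def estimate_simplification_cost(word_counts, price_per_1k_tokens,
                                 tokens_per_word=1.3, output_ratio=1.0):
    """Rough one-off cost of sending every page through a language model.

    word_counts         -- words on each page to be simplified (hypothetical data)
    price_per_1k_tokens -- assumed flat price per 1,000 tokens (not a real quote)
    tokens_per_word     -- assumed rule-of-thumb ratio; varies by model and text
    output_ratio        -- assumed size of the simplified output relative to the input
    """
    total_words = sum(word_counts)
    input_tokens = total_words * tokens_per_word
    output_tokens = input_tokens * output_ratio
    # Cost scales linearly with the number of tokens sent and received.
    return (input_tokens + output_tokens) / 1000 * price_per_1k_tokens


# Example: three hypothetical pages and a made-up price.
pages = [1200, 800, 3500]
print(estimate_simplification_cost(pages, price_per_1k_tokens=0.002))
```

Because the estimate is linear, doubling the amount of text (or re-running content that failed a quality check) doubles the bill, which is why identifying which pages actually benefit matters.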

Another important consideration is the correct translation of legal content and other critical content on the website.

We also need to understand what “amount” of text we can feed the AI and still maintain context. Our team observed better results when the AI was prompted with smaller pieces of text as opposed to large ones.
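One simple way to feed the AI smaller pieces is to split a page on paragraph breaks and group paragraphs up to a word limit. The sketch below is only an illustration of that idea, not the approach used on the day; the function name and the 150-word default are assumptions.

```python
def chunk_paragraphs(text, max_words=150):
    """Group paragraphs into chunks of at most max_words words.

    Paragraphs are never split, so a single paragraph longer than
    max_words becomes its own (oversized) chunk.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks, current, count = [], [], 0
    for paragraph in paragraphs:
        words = len(paragraph.split())
        # Start a new chunk if adding this paragraph would exceed the limit.
        if current and count + words > max_words:
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(paragraph)
        count += words
    if current:
        chunks.append("\n\n".join(current))
    return chunks


page = "First paragraph of a page.\n\nSecond paragraph.\n\nA third, longer paragraph here."
for chunk in chunk_paragraphs(page, max_words=8):
    print(repr(chunk))
```

Keeping whole paragraphs together is a deliberate choice here: it preserves local context within each prompt, at the cost of uneven chunk sizes.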

We agreed that it would be important to have metrics that would keep our ambition in check and weigh the impact expected vs costs involved.

Success Metrics

If you can’t measure it, you can’t improve it

The evidence is in the data. We decided that there would be three primary metrics that our solution would impact, and a positive change in them would correlate with the success of the solution.

- Reduce Bounce Rate: We expect a reduction in the bounce rate and fewer user drop-offs, especially on content-heavy pages with a lot of text for the user to consume. This can be tracked in Google Analytics.

- Tool adoption: We would also use Google Analytics to track tool adoption, i.e. the number of clicks on the simplify functionality. Increased adoption of the tool would be an indicator of success.

- Higher Engagement rate: Coupled with a reduction in the bounce rate, the average session time can indicate how engaged a user is with the website. Tools like Medallia that capture user engagement are also available on the market.
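As a rough illustration of how the three metrics could be computed from exported session data, here is a small sketch. The `Session` record and its fields are hypothetical, not a Google Analytics schema, and bounce rate is taken in the classic sense of single-page sessions; Google Analytics 4 defines it differently, as the share of sessions that were not engaged.

```python
from dataclasses import dataclass


@dataclass
class Session:
    # Hypothetical fields for illustration only.
    pages_viewed: int
    duration_seconds: float
    clicked_simplify: bool


def summarise(sessions):
    """Return bounce rate, tool adoption rate and average session time."""
    total = len(sessions)
    if total == 0:
        return {"bounce_rate": 0.0, "adoption_rate": 0.0, "avg_session_seconds": 0.0}
    bounces = sum(1 for s in sessions if s.pages_viewed <= 1)
    adopters = sum(1 for s in sessions if s.clicked_simplify)
    return {
        "bounce_rate": bounces / total,
        "adoption_rate": adopters / total,
        "avg_session_seconds": sum(s.duration_seconds for s in sessions) / total,
    }


sample = [
    Session(1, 10, False),
    Session(3, 120, True),
    Session(1, 20, True),
    Session(2, 50, False),
]
print(summarise(sample))
```

Comparing these figures before and after launching a simplify feature, on the same set of content-heavy pages, would show whether the change is moving in the expected direction.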

Yes, we built a Prototype in a day!

Our team was not happy just creating an idea. Always wanting to go the extra mile, we decided that we would build a working prototype. This would also unequivocally drive home the idea that AI is already here (for any doubting minds).

Armed with our laptops, internet, and collective tech expertise, we created a webpage for ‘Everyone’s Essex’ that would mimic the problem statement and prototype our solution.

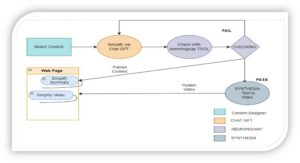

Our prototype would use the power of OpenAI’s ChatGPT for language simplification, a video AI tool called Synthesia that uses an avatar to read out the simplified text, and Hemingway, which analyses readability and quality-checks the text.
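For readers curious about what an automated readability check can look like, the sketch below computes the Automated Readability Index, one widely used formula that approximates a US school grade level from characters per word and words per sentence. This is an illustrative stand-in, not a description of how any particular tool works internally; the function name and the simple sentence and word splitting rules are assumptions.

```python
import re


def automated_readability_index(text):
    """Approximate grade level: higher scores mean harder text."""
    # Naive splitting: sentences end at ., ! or ?; words are runs of letters/digits.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z0-9']+", text)
    if not sentences or not words:
        return 0.0
    characters = sum(len(w.replace("'", "")) for w in words)
    return (4.71 * (characters / len(words))
            + 0.5 * (len(words) / len(sentences))
            - 21.43)


original = ("Notwithstanding considerable administrative complications, "
            "residents may nevertheless submit applications electronically.")
simplified = "You can still apply online. It may take longer."
print(automated_readability_index(original))
print(automated_readability_index(simplified))
```

A check like this could be run on the AI's output before publishing, flagging any simplified text that does not score lower than the original.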

What we learnt

One of the most remarkable aspects of the AI hackathon was the synergy it created between people from different disciplines. It sparked vibrant discussions where we shared insights, swapped ideas, and supported each other.

It helped create new connections that continue; our group chat still breathes with an occasional meme and not just the shared desire to solve real world problems through AI.

A few other learnings we had from the day are below.

- There is a trade-off between prompting for simplification versus summarisation.

- ChatGPT is very sensitive to both the amount of text you feed it and the prompt you provide it.

- Video generation is not perfect, but HeyGen was the best free option we found and was quick to implement.

- Real time simplification and video generation on a use-by-use basis would be expensive.

The day was packed full of learning, and these are the findings just from our group. Keep your eyes peeled for blog posts from other groups that were there on the day.
