I recently took part in design activity around the Essex website alpha and Futures Academy. In both cases we did some initial user research, then analysed our findings to generate insights and ideas for potential solutions.

This work has made me reflect on risk. More specifically, on how the purposeful introduction of risk within the design process can help create better solutions in ways that established approaches do not.

Reframing risk

As a recovering analyst and cheerleader for data-based decision-making, I felt quite uneasy about the intuitive leaps from initial user research findings to the generation of new ideas. After all, these were based on a relatively small number of observations, behaviours and quotes. What if our thinking wasn’t representative? What if we made things worse? What if we wasted precious time and effort creating something that just didn’t work? Didn’t we need more data? Where were all the stats? It all felt very... risky.

We think and talk about risk all the time. From the perpetual awareness of working in a political environment to the recent avalanche of GDPR warnings, thoughts of risk are never far away. These things are normally framed as damage limitation, or prompt thoughts of worst-case scenarios, neither of which generally leads to creative thinking! But there we were, coming up with ideas for how to make things better based on some things that a handful of people in and around our service said or did.

Purposeful and necessary

It was then that the penny dropped. The act of interpreting the things we saw and heard, from a small sample, into insights and ideas did introduce risk, but that was a purposeful and necessary part of the design process.

By focusing on the softer, more human things people said and did, rather than hard numbers or statistics, we were thinking much more creatively and empathetically about how we might improve things. We were much more willing to explore around the edges of the problem, and to take a leap into the unknown with our potential solutions. And we were able to challenge assumptions about the root cause of the issue: maybe what we think is causing the problem is wrong as well. Most importantly, how might we test this?

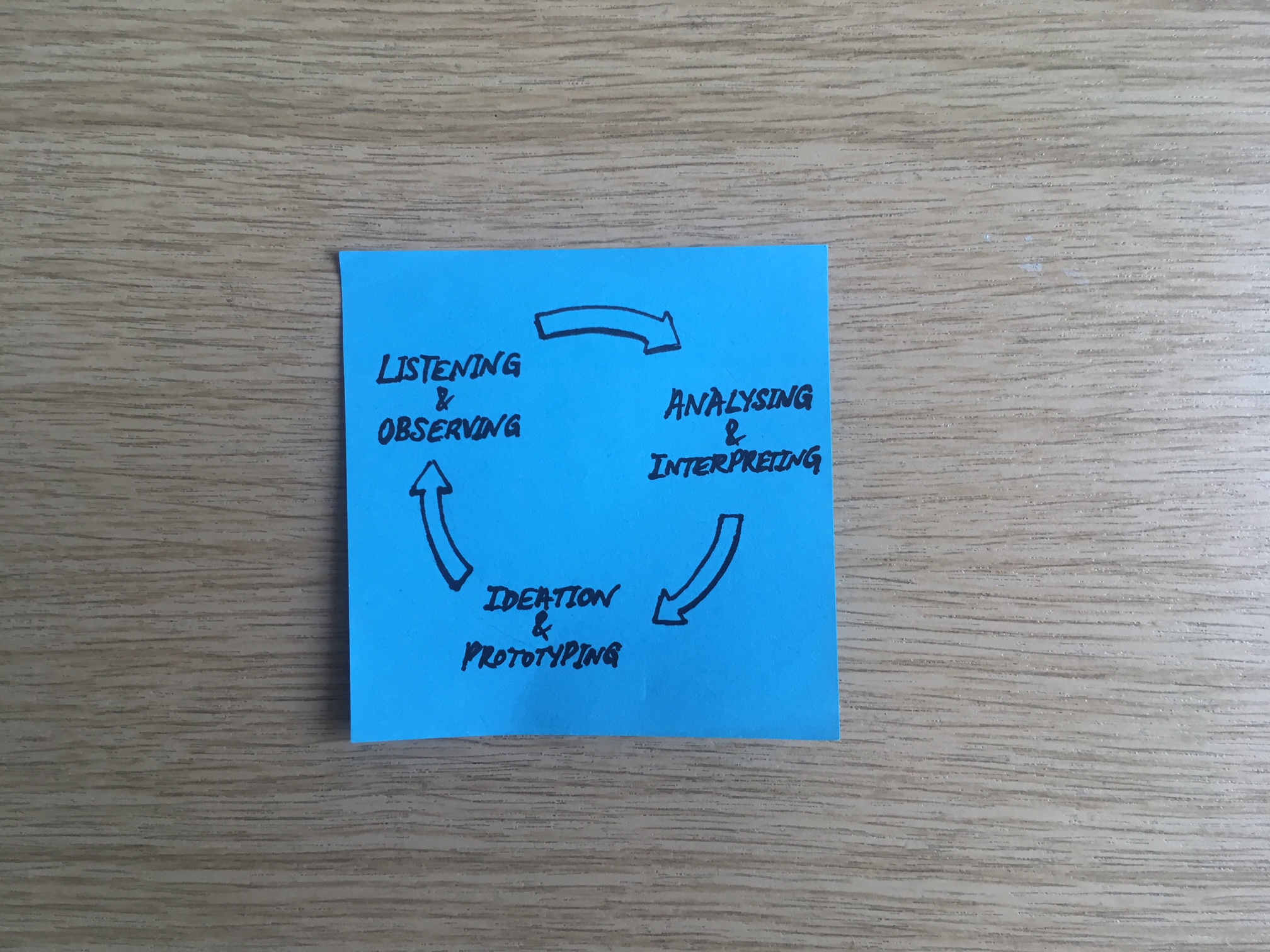

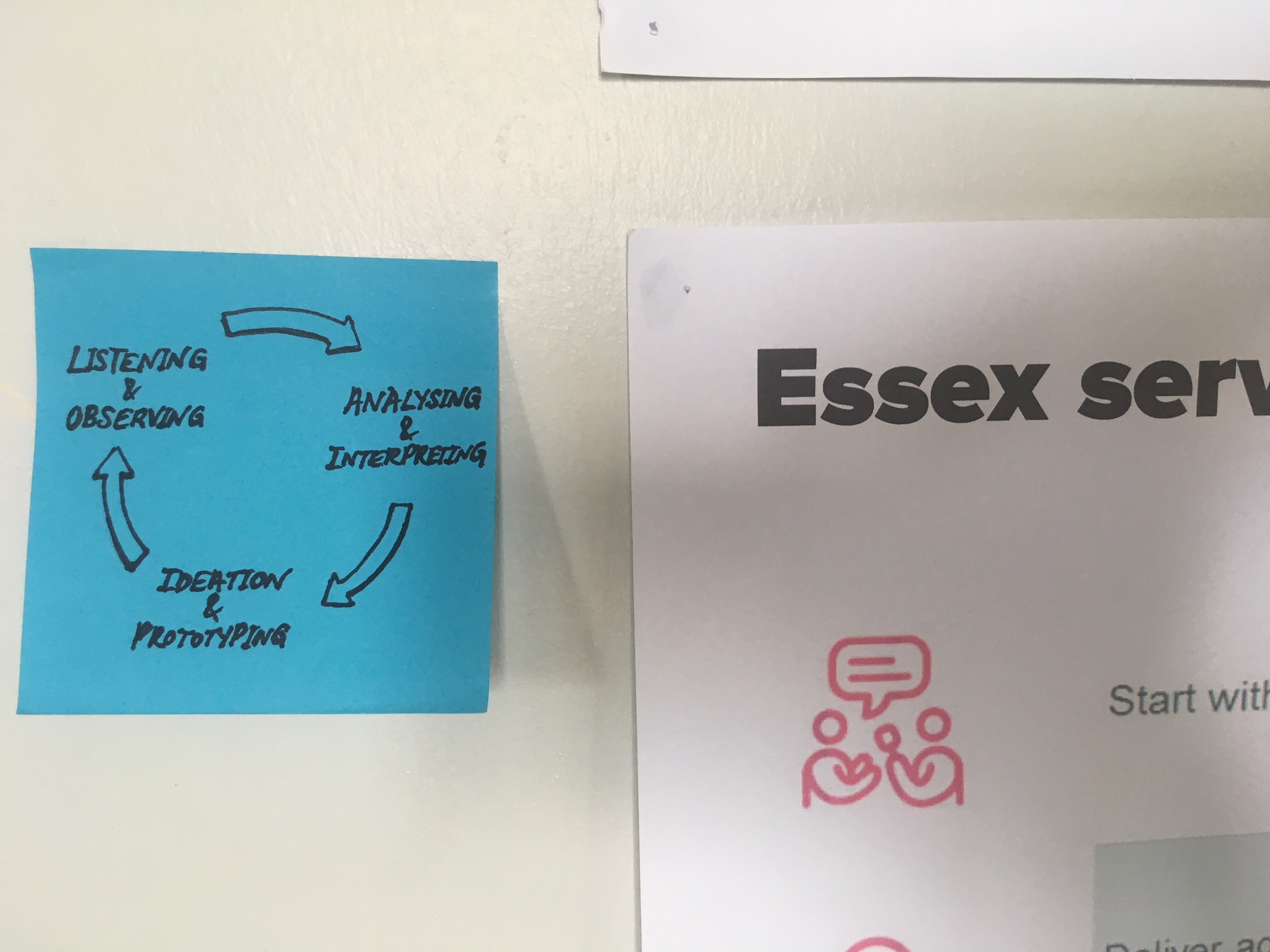

Of course, the introduction of risk needs to be managed, whether it’s a purposeful part of the process or not - and we do this by regularly testing our progress with users. The quick and repeated cycle of listening and observing, analysing and interpreting, ideating and prototyping, and back to testing, listening and observing shows us whether our assumptions are right or, if not, points us in the direction of where we might go next. And all the while we’re learning and opening our minds to new ways of solving old problems.

It’s been a really interesting process, and this reconsideration of risk is definitely one of my key takeaways from the recent work with colleagues as part of the Futures Academy. It’s got me thinking differently about risk, and how important it is for us to develop new ways of thinking to encourage creativity and nurture new ideas. And it’s certainly something I’m going to test more as we continue to develop our service design roadmap.
